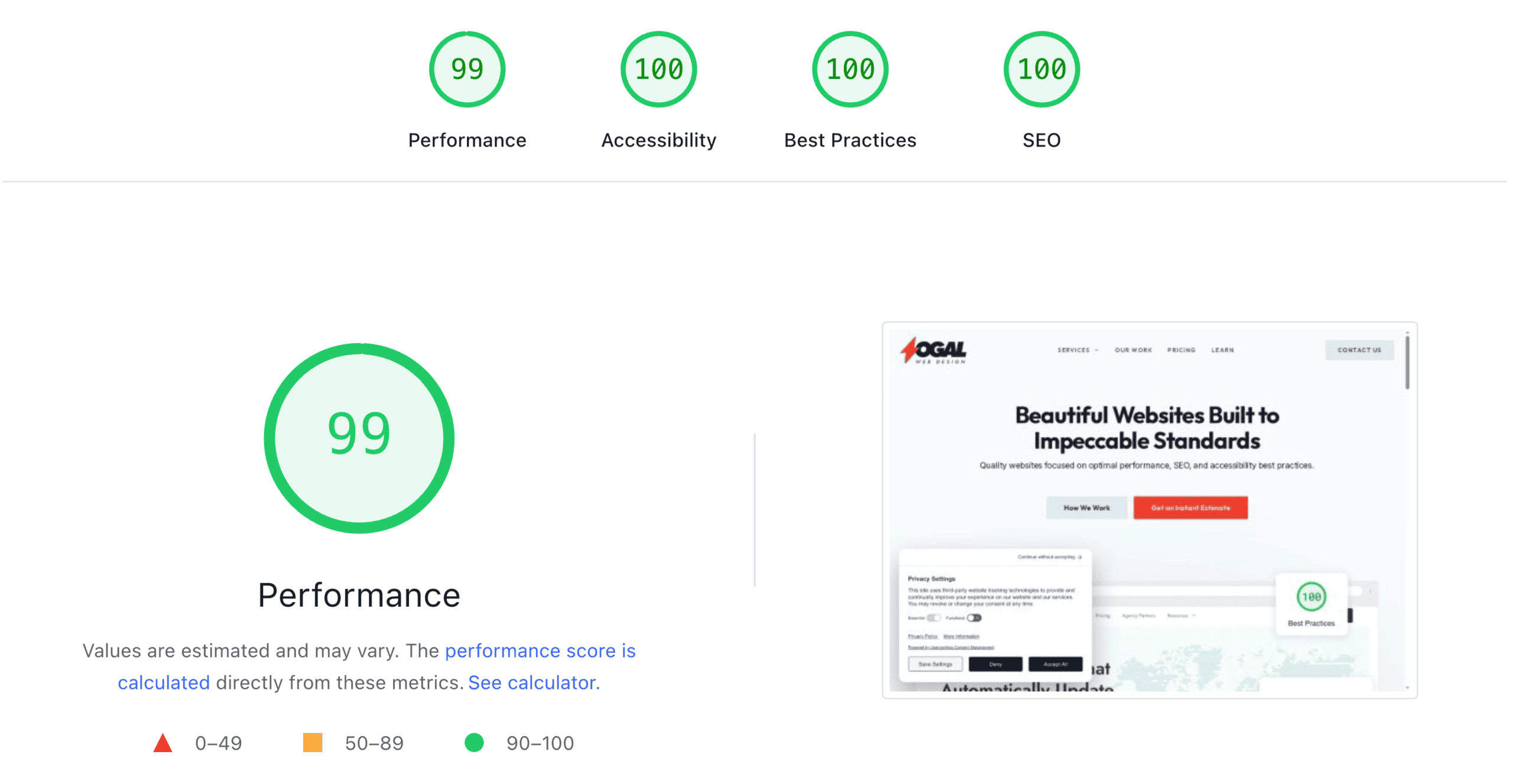

If you’ve ever run a PageSpeed Insights test on your site and noticed a section called “Core Web Vitals Assessment” — either showing a green “Passed” or an alarming red “Failed” — you’ve already encountered these metrics. But unless you’ve gone digging, you probably have no idea what LCP, INP, and CLS actually stand for, or why Google decided these three things specifically are worth measuring.

This post explains each one in plain English: what it measures, what causes it to fail, and what the thresholds mean. If you want the bigger picture of how Core Web Vitals fit into your site’s overall performance, the performance guide covers that context. If you want to understand how these metrics show up inside a Lighthouse report, the Lighthouse guide walks through that. This post is just about the three metrics themselves.

Why These Three Metrics

Google created Core Web Vitals because “website speed” is actually several different things. A page can feel instant for the first half-second and then freeze for three seconds before you can click anything. A page can load quickly but have images that jump around while they load, causing you to accidentally click the wrong thing. These are different problems, and they need different measurements.

Google settled on three metrics that each capture a distinct aspect of the user experience:

- LCP measures loading — how quickly the main content appears

- INP measures interactivity — how quickly the page responds when you do something

- CLS measures visual stability — whether things move around unexpectedly while loading

Each is scored against specific thresholds, and together they give Google a way to rank pages based on how they actually feel to real users — not just how fast the server responds.

One important note: Google replaced an older metric called FID (First Input Delay) with INP in March 2024. If you’ve seen older articles or reports still referencing FID, that metric is now retired. INP is stricter and more representative of real-world interactivity — worth understanding properly.

LCP — Largest Contentful Paint

What it measures

How long it takes for the largest visible element on the page to fully appear. This is usually your hero image, a large block of text above the fold, or a video thumbnail. Whatever occupies the most visual space in the viewport when the page first loads — that’s what LCP is timing.

Why it matters

LCP is the closest thing to “when does the page feel loaded?” From a user’s perspective, a page feels ready when the main content appears — not when every last script and image in the footer has finished. LCP captures that moment.

The thresholds

- Good: 2.5 seconds or less

- Needs improvement: 2.5–4.0 seconds

- Poor: over 4.0 seconds

What typically causes a poor LCP

Slow server response. If your hosting is slow to respond, everything downstream is delayed. A high time-to-first-byte (TTFB) directly pushes LCP up before the browser has even started rendering anything.

Unoptimized hero image. The LCP element is usually a large image. If it’s uncompressed, served in an outdated format like JPEG instead of WebP, or isn’t prioritized for loading, it delays LCP significantly. This is one of the most common and most fixable causes.

Render-blocking resources. If CSS or JavaScript files have to fully load before the browser can render anything, LCP is delayed even if the image itself is optimized. Page builders are notorious for this — they load large CSS libraries before anything on the page can appear.

No preloading on the LCP image. Browsers don’t always know in advance which image will be the LCP element. Adding a preload hint (<link rel="preload">) tells the browser to fetch it immediately rather than discovering it later in the rendering process.
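As a rough illustration of the preload hint described above, here is a small sketch that builds the tag as a string. The helper names and the example image path are hypothetical, and the `as="image"` and `fetchpriority="high"` attributes are additions beyond the bare `rel="preload"` mentioned in the text.

```typescript
// Hypothetical helper: escapes characters that would break an HTML attribute.
function escapeAttribute(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Hypothetical helper: builds a preload hint for the image you expect to be the LCP element.
function buildLcpPreloadTag(imageUrl: string): string {
  const href = escapeAttribute(imageUrl);
  // as="image" tells the browser what kind of resource to expect;
  // fetchpriority="high" asks it to fetch this ahead of other images.
  return `<link rel="preload" as="image" href="${href}" fetchpriority="high">`;
}

// "/images/hero.webp" is a made-up path used only for illustration.
console.log(buildLcpPreloadTag("/images/hero.webp"));
```

The resulting tag belongs in the page's `<head>`, as early as possible, so the browser sees it before it starts parsing the rest of the page.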

INP — Interaction to Next Paint

What it measures

How quickly the page visually responds after a user interaction — a click, a tap, a keyboard press. INP replaced FID (First Input Delay) in March 2024 and is meaningfully stricter.

The key difference from FID: FID only measured the delay before the browser started processing the interaction. INP measures the full cycle — from when you click to when the page visually updates in response. This is a much better representation of whether a page actually feels responsive.

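INP is measured across all interactions during a page visit. The score reflects the slowest interaction, except that on pages with many interactions one of the slowest is ignored for every 50 interactions, so a single freak outlier on a busy page doesn't define the result. On a typical visit, though, the worst one counts: if nine of ten clicks are instant but one takes 800ms, that slow click is what defines your INP.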
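To make the "worst interaction, minus a few outliers" idea concrete, here is a simplified sketch. It assumes the rule Google documents of ignoring one of the slowest interactions per 50 interactions; the function name and the sample latencies are made up, and the real measurement in the browser has more moving parts than this.

```typescript
// Simplified sketch of how an INP value is picked from one visit's interactions.
// Assumption: one of the slowest interactions is ignored per 50 interactions.
function estimateInp(latenciesMs: number[]): number | undefined {
  if (latenciesMs.length === 0) {
    return undefined; // no interactions means there is no INP value
  }
  // Sort slowest first without mutating the caller's array.
  const slowestFirst = latenciesMs.slice().sort((a, b) => b - a);
  // Skip one outlier for every 50 interactions on the page.
  const outliersToSkip = Math.floor(latenciesMs.length / 50);
  const index = Math.min(outliersToSkip, slowestFirst.length - 1);
  return slowestFirst[index];
}

// Nine quick clicks and one slow one (hypothetical numbers).
const tenClicks = [40, 35, 50, 45, 30, 60, 55, 40, 35, 800];
console.log(estimateInp(tenClicks)); // 800
```

With only ten interactions nothing is skipped, so the single 800ms click is the result, which matches the example in the text.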

Why it matters

A page can look loaded and feel broken. You click a button and nothing happens for a second. You tap a menu item and it takes a noticeable beat before it opens. These delays erode trust and cause people to click again, thinking something went wrong — which often makes things worse.

The thresholds

- Good: 200 milliseconds or less

- Needs improvement: 200–500 milliseconds

- Poor: over 500 milliseconds

What typically causes a poor INP

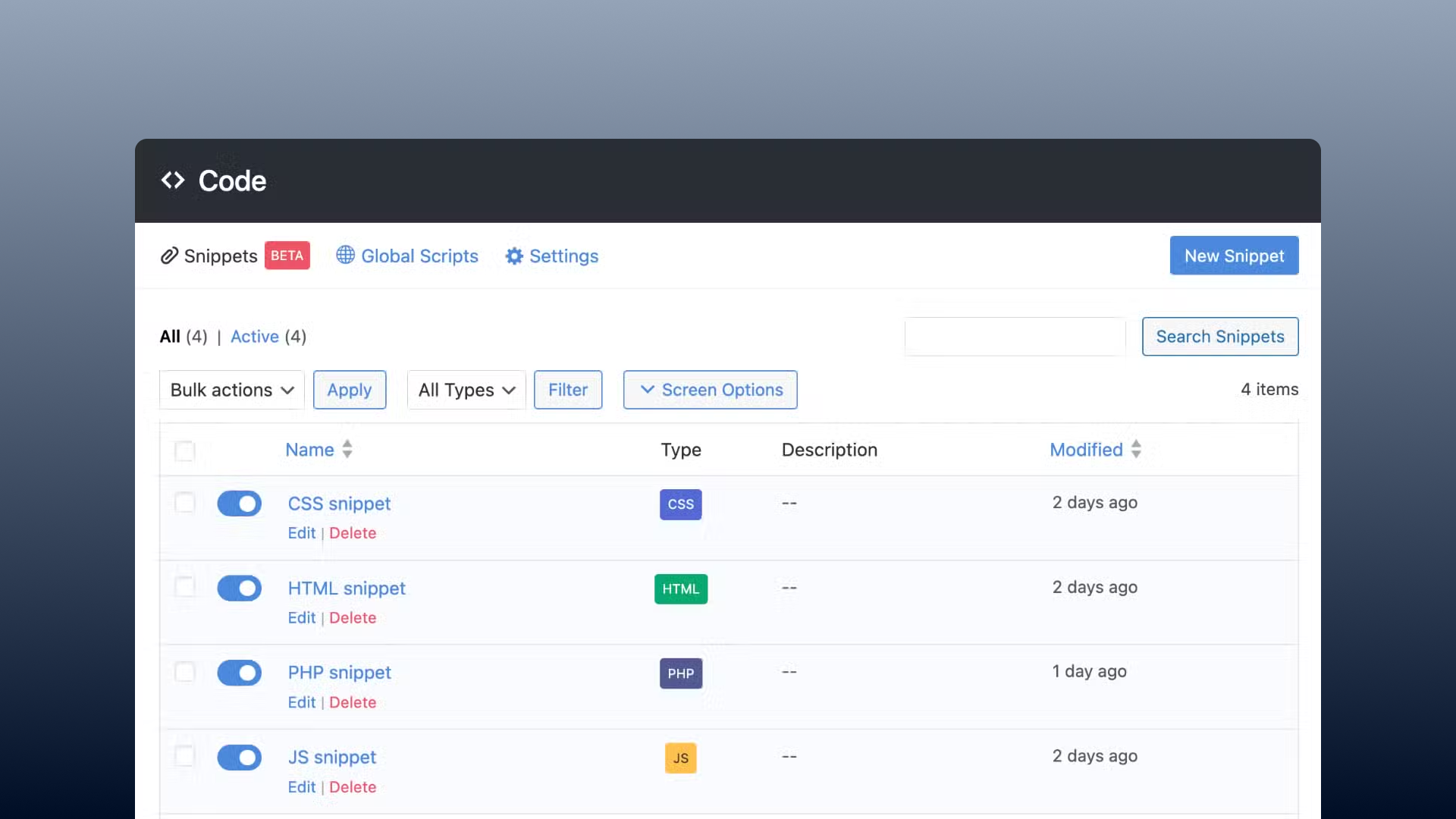

Heavy JavaScript execution. When the browser’s main thread is busy running JavaScript, it can’t respond to user input. Long tasks — chunks of JavaScript that run for more than 50ms without a break — are the primary cause of poor INP. Heavy analytics scripts, chat widgets, and ad libraries are common culprits.

Third-party scripts. Scripts from outside your site (ad platforms, marketing tools, social sharing buttons) run on your page’s main thread too. A poorly-written third-party script can block interactions even if your own code is lean.

Page builders generating excessive JavaScript. Sites built with heavy page builders often ship large JavaScript bundles that keep the main thread occupied long after the visual content has appeared. The page looks loaded but isn’t responsive yet.

Large DOM size. The more HTML elements a page has, the more work the browser has to do when something changes. Excessively complex page structures — often a side effect of nested page builder containers — increase rendering time on every interaction.
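One common way to tame the long tasks described above is to break the work into small batches and hand control back to the browser between them. The sketch below shows the general idea only; the function names and the default batch size are illustrative assumptions, not something a particular library or page builder provides.

```typescript
// Split a list of work items into small batches (pure helper).
function toBatches<T>(items: T[], batchSize: number): T[][] {
  if (batchSize < 1) {
    throw new Error("batchSize must be at least 1");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += batchSize) {
    batches.push(items.slice(i, i + batchSize));
  }
  return batches;
}

// Process batches one at a time, giving control back to the main thread
// between batches so pending clicks and taps can be handled.
async function processWithoutBlocking<T>(
  items: T[],
  handleItem: (item: T) => void,
  batchSize: number = 100 // illustrative value; tune so each batch stays well under 50 ms
): Promise<void> {
  for (const batch of toBatches(items, batchSize)) {
    batch.forEach(handleItem);
    // setTimeout(0) queues the next batch as a separate task.
    await new Promise<void>((resolve) => setTimeout(resolve, 0));
  }
}
```

The total work is the same, but no single task holds the main thread for long, which is what INP rewards. This only helps with code you control; a third-party script that blocks the thread still has to be deferred or removed.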

CLS — Cumulative Layout Shift

What it measures

Whether elements on the page move unexpectedly, both while it loads and afterwards. Each shift is scored by how much of the viewport was affected and how far elements moved, and CLS reports the worst burst of shifts during the visit rather than a running total of every shift.

If you’ve ever tried to read an article and had an ad load above the text, pushing everything down — or tried to click a button and had it jump out of position right as you tapped — those are layout shifts. CLS measures how much of that is happening.
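For the curious, Google's current definition groups shifts into "session windows": shifts less than one second apart belong to the same window, a window is capped at five seconds, and the CLS value is the largest window total. A minimal sketch of that grouping, with hypothetical type and function names and made-up sample data:

```typescript
interface LayoutShift {
  timeMs: number; // when the shift happened, in ms since the page started loading
  score: number;  // score of that individual shift
}

// Sketch of session-window grouping: shifts less than 1 s apart are grouped,
// a window lasts at most 5 s, and the result is the largest window total.
function estimateCls(shifts: LayoutShift[]): number {
  const ordered = shifts.slice().sort((a, b) => a.timeMs - b.timeMs);
  let worstWindow = 0;
  let windowTotal = 0;
  let windowStart = 0;
  let previousTime = 0;

  ordered.forEach((shift, i) => {
    const startsNewWindow =
      i === 0 ||
      shift.timeMs - previousTime >= 1000 ||
      shift.timeMs - windowStart >= 5000;
    if (startsNewWindow) {
      windowTotal = 0;
      windowStart = shift.timeMs;
    }
    windowTotal += shift.score;
    previousTime = shift.timeMs;
    worstWindow = Math.max(worstWindow, windowTotal);
  });
  return worstWindow;
}

// Two small shifts early on, then a bigger one a few seconds later (hypothetical data).
const sampleShifts: LayoutShift[] = [
  { timeMs: 100, score: 0.05 },
  { timeMs: 600, score: 0.04 },
  { timeMs: 4000, score: 0.2 },
];
console.log(estimateCls(sampleShifts)); // 0.2
```

The first two shifts add up to about 0.09 in one window; the later shift starts a new window worth 0.2, and the larger of the two is what gets reported.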

Why it matters

Layout shifts are frustrating in a way that’s hard to articulate. Nothing “broke” — the page loaded — but the experience felt janky and uncontrolled. For mobile users especially, layout shifts frequently cause accidental taps on the wrong element.

The thresholds

- Good: 0.1 or less

- Needs improvement: 0.1–0.25

- Poor: over 0.25

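CLS is scored differently from LCP and INP: it's a unitless number, not a time measurement. Each shift's score is the fraction of the viewport that was affected multiplied by how far things moved (also as a fraction of the viewport), so a score of 0.1 does not simply mean 10% of the page shifted. For example, a block covering half the screen that drops by a quarter of the screen height scores about 0.19. A score of 0.25 is a lot of movement.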
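Here is the arithmetic for a single shift as a tiny sketch, assuming Google's documented formula of impact fraction multiplied by distance fraction. The function name is hypothetical and the numbers are a worked example, not a measurement.

```typescript
// Score for one layout shift: impact fraction multiplied by distance fraction.
// impactFraction: share of the viewport touched by the element before and after the move.
// distanceFraction: how far it moved, relative to the viewport's larger dimension.
function layoutShiftScore(impactFraction: number, distanceFraction: number): number {
  return impactFraction * distanceFraction;
}

// An element covering half the viewport moves down by a quarter of the viewport height.
// Its old and new positions together touch 75% of the viewport.
const singleShiftScore = layoutShiftScore(0.75, 0.25);
console.log(singleShiftScore); // 0.1875
```

That one shift alone lands in the "needs improvement" band, which shows how quickly a single large jump uses up the 0.1 budget.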

What typically causes a poor CLS

Images without dimensions. When a browser loads an image and doesn’t know how tall it is in advance, it renders the surrounding text first and then pushes everything down when the image loads. Setting explicit width and height attributes on images tells the browser to reserve space before the image arrives, preventing the shift.

Ads and embeds without reserved space. Ad slots that load dynamically are one of the most common causes of layout shift. If the slot doesn’t have a defined height, whatever is below it shifts down when the ad appears.

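Web fonts causing a flash. When a page uses a custom font and it takes time to load, the browser temporarily shows text in a fallback font. If the two fonts have different sizes, everything reflows when the real font loads. Note that font-display: swap keeps text visible while the font loads but still allows that reflow; font-display: optional, preloading the font file, or choosing a fallback font with similar proportions reduces it. The best solution is still ensuring fonts load quickly.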

Dynamically injected content. Any content that gets added to the page after initial load — popups, banners, notifications — that pushes existing content out of the way contributes to CLS.
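To see why width and height attributes prevent the image-related shift described above, it helps to look at the arithmetic the browser can do before the file arrives. This sketch assumes the common setup where the image scales to its container width with an automatic height; the function name and numbers are illustrative.

```typescript
// Height the browser can reserve for an image before it downloads,
// given the width/height attributes and the width it will be rendered at (CSS pixels).
function reservedHeight(attrWidth: number, attrHeight: number, renderedWidth: number): number {
  if (attrWidth <= 0 || attrHeight <= 0) {
    return 0; // no usable dimensions: nothing is reserved, so content shifts later
  }
  // The attributes give the aspect ratio; the layout width does the rest.
  return renderedWidth * (attrHeight / attrWidth);
}

// A 1600x900 image displayed in an 800px-wide column (hypothetical sizes).
console.log(reservedHeight(1600, 900, 800)); // 450
```

The same principle applies to ad slots and embeds: if the container has a known height up front, whatever sits below it stays put when the content arrives.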

How Google Measures These in Practice

One thing worth understanding: there are two versions of your Core Web Vitals scores.

Lab data — what you see when you run a PageSpeed Insights test — is a simulated measurement under controlled conditions. It’s consistent and useful for diagnosing issues, but it doesn’t reflect what real users experience on their actual devices and connections.

Field data — what PageSpeed Insights shows as “Core Web Vitals Assessment” at the top of the results — is collected from real Chrome users visiting your site over the previous 28 days. This is what Google uses for ranking purposes. A site that passes in lab conditions but fails in the field has a real problem — usually from third-party scripts or device-specific issues that don’t show up in simulation.

If your field data shows “Failed” even though your lab scores look decent, the gap is telling you something. Common causes are ad scripts, chat widgets, or video embeds that aren’t captured cleanly in the lab test.
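Field data is a spread of real visits, not a single number, and Google judges each metric at the 75th percentile of that spread. The sketch below uses the simple nearest-rank definition of a percentile as an assumption; it is not a description of how Google's own pipeline aggregates the data, and the sample values are invented.

```typescript
// Nearest-rank percentile over a list of field samples.
function percentile(samples: number[], p: number): number | undefined {
  if (samples.length === 0 || p <= 0 || p > 100) {
    return undefined;
  }
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[rank - 1];
}

// Hypothetical LCP samples in milliseconds from eight real visits.
const lcpSamplesMs = [1800, 2100, 1900, 5200, 2300, 2000, 2200, 2600];
console.log(percentile(lcpSamplesMs, 75)); // 2300
```

In this made-up sample, one very slow visit (5200ms) doesn't sink the page, but if a quarter or more of visits were that slow, the 75th percentile would move into failing territory.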

Frequently Asked Questions

Do Core Web Vitals directly affect Google rankings? Yes. Google confirmed Core Web Vitals as a ranking signal as part of its Page Experience update. It’s one factor among many — strong content can still outrank a faster competitor — but for pages that are otherwise evenly matched, CWV is a tiebreaker. And a site with very poor CWV scores is fighting an uphill battle.

What’s a passing Core Web Vitals assessment? To pass, a page needs to hit the “Good” threshold on all three metrics (LCP of 2.5s or less, INP of 200ms or less, CLS of 0.1 or less) for at least 75% of visits, based on field data from real users, not just lab scores.
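Put as code, the pass/fail rule looks roughly like this. It is a sketch using the thresholds from this post; the type and function names are hypothetical, and the input values are assumed to be 75th-percentile field numbers.

```typescript
type Rating = "good" | "needs-improvement" | "poor";

interface Thresholds {
  good: number; // at or below this value is "good"
  poor: number; // above this value is "poor"
}

const THRESHOLDS = {
  lcpMs: { good: 2500, poor: 4000 },
  inpMs: { good: 200, poor: 500 },
  cls: { good: 0.1, poor: 0.25 },
};

function rate(value: number, t: Thresholds): Rating {
  if (value <= t.good) return "good";
  if (value <= t.poor) return "needs-improvement";
  return "poor";
}

interface FieldMetrics {
  lcpMs: number;
  inpMs: number;
  cls: number;
}

// A page passes only if all three metrics are rated "good".
function passesAssessment(m: FieldMetrics): boolean {
  return (
    rate(m.lcpMs, THRESHOLDS.lcpMs) === "good" &&
    rate(m.inpMs, THRESHOLDS.inpMs) === "good" &&
    rate(m.cls, THRESHOLDS.cls) === "good"
  );
}

// Fast to load and stable, but slow to respond (hypothetical values).
console.log(passesAssessment({ lcpMs: 2100, inpMs: 350, cls: 0.05 })); // false
```

The point of the example is the "all three" part: one metric in the "needs improvement" band is enough to fail the whole assessment.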

My LCP is fine but my INP is failing — what do I do? INP failures almost always trace back to JavaScript. Start by checking for third-party scripts (analytics, chat, ads) that might be blocking the main thread. Tools like Chrome DevTools’ Performance panel can show you which scripts are running long tasks. If the site is built on a heavy page builder, that’s often the root cause — it’s generating JavaScript overhead that keeps the main thread busy.

Why did Google replace FID with INP? FID only measured the delay before the browser started responding to an interaction — not how long it took to finish responding. This meant a page could pass FID while still feeling sluggish on complex interactions. INP captures the full response time, making it a much more honest measure of interactivity.

Can I improve Core Web Vitals without rebuilding my site? For LCP and CLS, often yes — image optimization, adding dimensions to images, and optimizing fonts are changes that can be made without a rebuild. INP is harder. If it’s caused by third-party scripts, you may be able to defer or remove them. If it’s caused by a fundamentally heavy page builder generating excessive JavaScript, there’s a ceiling to how much you can improve it without addressing the underlying build.

The Bottom Line

LCP, INP, and CLS are Google’s way of measuring the parts of your site’s speed that users actually feel — how fast the main content appears, how quickly the page responds to interaction, and whether things jump around during loading.

Each metric has a distinct cause and a distinct fix. Understanding which one is failing is the first step toward knowing whether you’re dealing with a targeted, fixable problem or something more structural.

If your Core Web Vitals assessment is showing “Failed” and you’re not sure where to start, running a PageSpeed Insights test on your most important pages will show you exactly which metric is the issue and what’s causing it. And if what you find points to something deeper than a quick fix, that’s worth a conversation.
